You are here

MySQL Cluster - Cluster circular replication with 2 replication channels

A few days ago I had to deal with MySQL Cluster replication. I did not do this for a while so I was prepared to expect some interesting surprises once again.

For those who MySQL Cluster - Cluster circular replication is the daily business they may skip this article. For all the others they possibly can profit from our learnings.

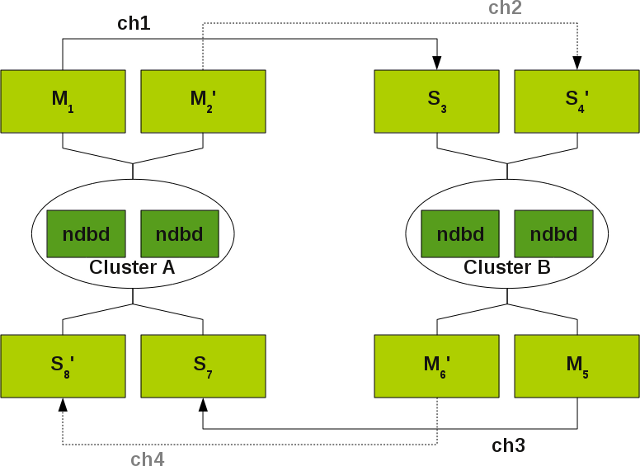

I am talking about the following MySQL Cluster set-up:

More detailed information about such set-ups you can find in the MySQL Cluster documentation.

Situations that lead to a channel failover

What are the problems with MySQL Cluster that lead to a channel failover:

- MySQL master can loose connection to the MySQL Cluster (lost_event, gap).

- MySQL master cannot catch up with the load of the MySQL Cluster and thus gets a lost event (gap). I am really wondering about the argument in the documentation [ 1 ], that in such a case we can do a channel failover. Because when Master 1 cannot catch up with the load, why should then Master 2 be capable to do it...?).

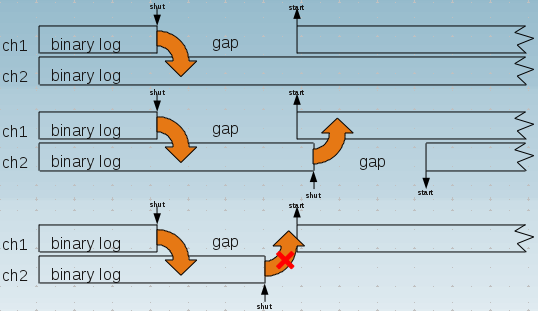

What a gap (lost_event) is you can see in the following sketch:

How to set-up such a system I do not discuss here. You can find the instructions for this in the MySQL Cluster documentation.

Our findings

Our findings were the following:

Duplicate primary key entries

We got some duplicate key entries for our AUTO_INCREMENT Primary Keys:

ERROR 1062 (23000): Duplicate entry '260' for key 'PRIMARY'

The reason for this is that we were setting ndb_autoincrement_prefetch_sz to 256 for better INSERT performance.

The consequence of this is, when you insert data in all mysqld's of both clusters at the same time you get earlier or later a Primary Key conflict.

We solved this problem by setting different auto_increment_increment and auto_increment_offset values for the SQL nodes on both MySQL Clusters.

Recommend channel failover procedure only works with traffic

A further issue we discovered already long time ago with an other customer is that the recommended channel failover procedure [ 2 ]:

slave1> STOP SLAVE; slave1> SELECT MAX(epoch) AS latest FROM mysql.ndb_apply_status; master2> SELECT SUBSTRING_INDEX(File, '/', -1) AS master_log_file , Position AS master_log_pos FROM mysql.ndb_binlog_index WHERE epoch > <latest> ORDER BY epoch ASC LIMIT 1; slave2> CHANGE MASTER TO master_log_file='<master_log_file>', master_log_pos=<master_log_pos>;

only works when you have traffic on the MySQL Cluster. If you have no traffic this query returns an empty result set and you have to rely on the SHOW MASTER STATUS command instead.

Documentation bug about log_slave_updates

This is always true for the channels ch3 and ch4 from the Cluster B to Cluster A when you have NO traffic on Cluster B and you follow the documentation where it states for such a set-up log_slave_updates MUST NOT be enabled [ 3 ].

I discussed this with a few people and possibly here the documentation seems to be wrong. The Masters for a 2 Cluster replication can have log_slave_updates enabled and IMHO the masters of a 3 Cluster Replication MUST have the log_slave_updates enabled.

But still, when you have no traffic on both Clusters you have to change the method to determine the correct binary log position when you want to switch the traffic. This makes it more difficult when you want to automatize or script channel failover.

Empty epochs

Earlier, when I remember correctly, MySQL Cluster has always written empty epochs to the binary log. So this would guarantee that you have always some traffic on the channels. Then I complained and the MySQL Cluster developers have fixed this behaviour. But for the current case this behaviour would make sense. So I was looking for the ndb-log-empty-epochs variable and hoped that it would enable this behaviour again. But some how it does not report some epochs in the binary log in my set-up. At least not in the short time I was looking at it.

Log_slave_updates is also used with the binlog injector thread

A further learning with the log_slave_updates was, that according to the documentation, this parameter comes only into play, when a slave also acts as master [ 4 ]. What means that he only writes the data into its binary log when he directly retrieves the data from its master. It seems like this was a wrong assumption. A master seems to write also the statements coming through the cluster via the binlog injector thread [ 5 ]. This possibly an other Documentation bug or at least not completely documented.

Skip_slave_start should be used

A further pitfall was that I forgot to set the skip-slave-start variable. This IMHO should be set always on the slave in such a set-up. When a slave starts he cannot know if he should be the active channel or not.

Summary

- Monitor your replication channels.

- Consider using

auto_increment_incrementandauto_increment_offsetwhen writing to both MySQL Clusters or avoidAUTO_INCREMENT. - Have a clever channel failover script.

- Use

log_slave_updateson all the masters. - Use

skip_slave_starton all the slaves.

These were the findings and learnings of my last engagement with MySQL Cluster replication and failover replication channels. If you have some more or other experience I would be happy to hear about.

When I find some time we should write a script to automatically failover channels in such a set-up.

- Shinguz's blog

- Log in or register to post comments

Comments

Recommend channel-failover procedure

Query and traffic

Cluster Circular Replication

Careful using log_slave_updates

Cluster Circular Replication

auto_increment_increment / auto_increment_offset

Closing Circle again and channel_failover script

shell> ./channel_failover.pl status Status for all channel groups and channels: Channel group: channel_group_1 IO_thread : Yes - OK SQL_thread: Yes - OK Channel ch1 (M: master1, S: slave3) - up IO_thread : No - OK SQL_thread: No - OK Channel ch2 (M: master2, S: slave4) - down Channel group: channel_group_2 IO_thread : Yes - OK SQL_thread: Yes - OK Channel ch3 (M: master5, S: slave7) - up IO_thread : No - OK SQL_thread: No - Errno: 1590 - The incident LOST_EVENTS occurred on the master. Message: mysqld startup Channel ch4 (M: master6, S: slave8) - down shell> ./channel_failover.pl stop ch3 Found channel ch3 with Master: master5 and Slave: slave7 Stopping Slave slave7... shell> ./channel_failover.pl status Status for all channel groups and channels: Channel group: channel_group_1 IO_thread : Yes - OK SQL_thread: Yes - OK Channel ch1 (M: master1, S: slave3) - up IO_thread : No - OK SQL_thread: No - OK Channel ch2 (M: master2, S: slave4) - down Channel group: channel_group_2 IO_thread : No - OK SQL_thread: No - OK Channel ch3 (M: master5, S: slave7) - down IO_thread : No - OK SQL_thread: No - Errno: 1590 - The incident LOST_EVENTS occurred on the master. Message: mysqld startup Channel ch4 (M: master6, S: slave8) - downchannel_failover.pl v0.02

Modified channel failover steps in recent releases

Hi Oli,

Good writeup!

We've recently modified the schema of the ndb_binlog_index table to include some extra per-epoch information - specifically the next_file and next_position columns.

The existing File and Position columns which you use above indicate the binlog file + position of the start of the epoch transaction. The new next_file and next_position columns indicate the first binlog position *after* the epoch transaction.

These new columns can be used during channel cutover with a slightly modified

SELECTstatement in the steps above :Note that this is a simpler

SELECTstatement (noORDER BYorLIMIT), and it has the following desirable properties :SHOW MASTER STATUS.Of course the old cutover mechanism continues to work with its limitations, but I'd recommend using the new one (really just changing to use the

SELECTabove) for any new deployment.This is available from MySQL Cluster 7.0.33 and 7.1.22 up.

Implementing Failover with MySQL Cluster Replication

Frazer

Re: Modified channel failover steps in recent releases

Hello Frazer,

Thank you very much for your valuable feedback. Good that Oracle is doing something in this direction and make MySQL even easier to use!

Oli